Strap yourselves in, friends, because I’m about to take you on a wild ride through bird-filled soundscapes, over the struggle-filled hills of coding, and past the glorious fieldwork hills of yonder.

First, I should address the fact that it’s been almost 3 months since my last blog post on January 4th. I’ve been at conferences, out on fieldwork, frantically coding, and doing plenty of other things that have seen me take a brief break from blog writing. But that’s ok, I’m back now!

SO ANYWAY, I was trying to think of what to write about this fortnight, and it came to mind that I haven’t really gone into what I’m researching for my masters. In short, I am developing automated bioacoustic methods to monitor south-eastern Australian parrots, but that’s a sentence full of jargon, so let me break it down a little.

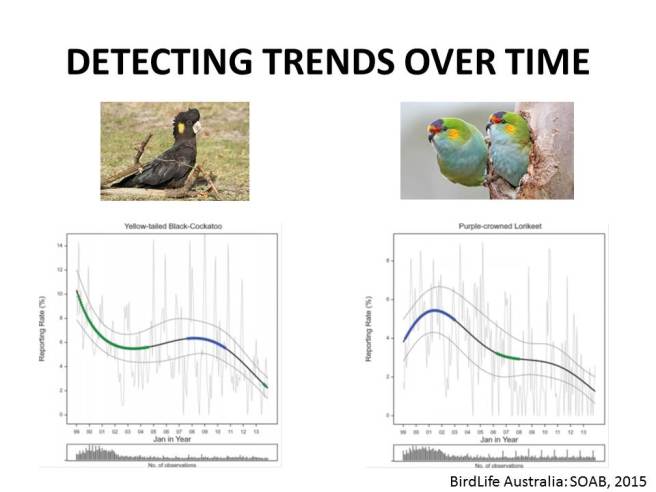

As per an earlier blog post of mine (*ahem* here), monitoring species for conservation is important and valuable, and emerging technologies allow for better monitoring outcomes. My research explores three algorithms that generate species-specific call templates. That is to say, each one combines a set of pre-identified recordings of an animal into a template, then uses that template to find the target species within field recordings. Each algorithm matches the template to the field recording in a slightly different way; I won’t go into that in this post, but it’s not a bad idea for a future one. I have been testing these three automated recognition algorithms across 22 species of parrots found in south-eastern Australia to work out how precise they are, and how likely they are to detect the target species when it is present.

Next up is tweaking the output a little to make the recognisers either more specific (they will mistake other species for the target species less often, but may miss some detections of the target) or more sensitive (they won’t miss detections of the target species, but may mistake other species for it), and then analysing the benefits and costs of each approach.
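To make the specific-versus-sensitive trade-off a bit more concrete, here’s a minimal Python sketch (not my actual R workflow). It assumes each candidate detection has a template-match score and has been checked by a person; the `Detection` class, the `evaluate` function and all of the scores and labels are hypothetical, made up purely for illustration. Raising the score cutoff means fewer false alarms but more missed calls, and lowering it does the reverse.

```python
from dataclasses import dataclass


@dataclass
class Detection:
    score: float      # how closely a stretch of recording matched the template
    is_target: bool   # whether a person confirmed it really was the target species


def evaluate(detections, cutoff):
    """Return (precision, recall) when every score >= cutoff is kept as a detection.

    precision: share of kept detections that really are the target species
    recall:    share of real target calls that were kept (i.e. sensitivity)
    """
    tp = sum(1 for d in detections if d.score >= cutoff and d.is_target)
    fp = sum(1 for d in detections if d.score >= cutoff and not d.is_target)
    fn = sum(1 for d in detections if d.score < cutoff and d.is_target)
    # If nothing is kept (or nothing is real), fall back to 1.0 by convention.
    precision = tp / (tp + fp) if tp + fp else 1.0
    recall = tp / (tp + fn) if tp + fn else 1.0
    return precision, recall


# Made-up example values, purely for illustration.
candidates = [
    Detection(0.9, True), Detection(0.8, True), Detection(0.7, False),
    Detection(0.6, True), Detection(0.4, False), Detection(0.3, True),
]

for cutoff in (0.75, 0.25):
    print(cutoff, evaluate(candidates, cutoff))
```

With these toy values, a cutoff of 0.75 keeps only genuine calls but misses half of them (the "specific" end), while a cutoff of 0.25 catches every call at the cost of two false alarms (the "sensitive" end).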

Making sense so far? I sure hope so, because I can’t live-update this post to match everyone’s level of understanding. What am I saying? Get back on track, Kate…

Back in my blog post about monitoring, I did briefly explain why bioacoustics is useful and what I was planning to do. But that was almost a year ago, and by now I have all of my data from various field trips, have analysed a sizeable chunk of it, and have built some preliminary recognisers using RStudio. Basically, at this stage we’ve found that we can actually make these automated recognisers for parrots, and I have had a lot of success making templates for Gang-gang Cockatoos.

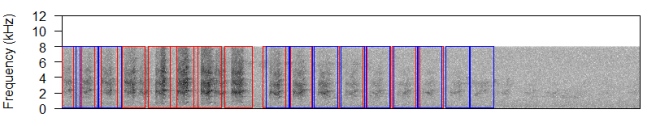

In the image above, you can see spectrogram output showing Gang-gang Cockatoo calls fading in and out as the bird flies over the automated recording unit. The stronger calls are outlined in red and the weaker ones in blue, with a decent amount of overlap where the mid-range calls are outlined in both. Later in the recording a warbler calls, but the templates don’t identify it as a Gang-gang call, which suggests that these first-build recognisers can pick out the target species with some accuracy.
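For anyone curious what “matching a template to a field recording” can look like, here’s a rough Python sketch of one common approach, spectrogram cross-correlation, using NumPy. This isn’t necessarily how any of the three algorithms I’m testing work (that’s a future post!); the synthetic spectrogram, the rising “call” shape and the `match_scores`/`detect` names are all hypothetical, for illustration only. The template slides along the time axis, each position gets a correlation score, and positions scoring at or above a cutoff count as detections.

```python
import numpy as np


def match_scores(spectrogram, template):
    """Slide `template` along the time axis of `spectrogram` and return the
    Pearson correlation at each start frame. Both arrays are shaped
    (frequency_bins, time_frames) and must share the same frequency bins."""
    n_freq, n_frames = template.shape
    if spectrogram.shape[0] != n_freq:
        raise ValueError("template and spectrogram need the same frequency bins")
    t = template - template.mean()
    t_norm = np.sqrt((t ** 2).sum())
    scores = np.zeros(spectrogram.shape[1] - n_frames + 1)
    for start in range(scores.size):
        window = spectrogram[:, start:start + n_frames]
        w = window - window.mean()
        denom = t_norm * np.sqrt((w ** 2).sum())
        scores[start] = (t * w).sum() / denom if denom > 0 else 0.0
    return scores


def detect(scores, cutoff):
    """Start frames whose score reaches the cutoff (hypothetical helper)."""
    return np.flatnonzero(scores >= cutoff)


# Hypothetical template: a call that rises in pitch over 10 time frames.
template = np.zeros((20, 10))
for k in range(10):
    template[5 + k, k] = 1.0

# Synthetic "field recording": background noise with one call at frame 30.
rng = np.random.default_rng(0)
spectrogram = rng.normal(0.0, 0.1, size=(20, 100))
spectrogram[:, 30:40] += template

scores = match_scores(spectrogram, template)
print(int(scores.argmax()), detect(scores, cutoff=0.5))
```

Because the score is a correlation, it depends on the shape of a call rather than its loudness, although background noise will still drag the scores down for faint calls.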

Next on the cards are further accuracy tests, as well as building more sophisticated templates and testing them on less ‘clean’ field recordings. Anyway, that’s all for this fortnight! Next fortnight I’ll either give you another species profile or go into which recognition algorithms I’m using and roughly how they work.